User:Yan/Dev

Welcome, digital archivists, to Archive Team! Programmers, developers, engineers… You're here because the clown is going to delete your data—and you're going to stop that. So, who is ready to rescue some history?

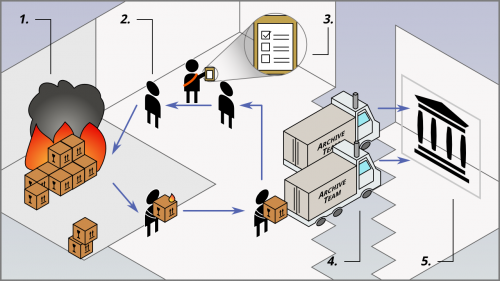

Infrastructure overview

The Archive Team infrastructure is a distributed web processing system used for distributed preservation of service attacks.

Component Overview

| Figure | Description |
|---|---|
| 1 | Website in Danger |
| 2 | Warrior |
| 3 | Tracker |
| 4 | Staging Server |
| 5 | Internet Archive |

Website in Danger

The website in danger is typically a website exhibiting some combination of:

- acquihire

- mass layoffs

- neglect, decay, unhealthy, or owners missing in action

- political and legal issues

- robots.txt exclusion file that forbids crawling by Wayback Machine (whether intentionally or unintentionally)

- cultural significance

Warrior

The Warrior is client code run by volunteers that grabs/scrapes the content of the website in danger.

Websites often implement throttling systems to protect themselves against spam or excessive server load. Typical systems ban IP addresses, so many Warriors running on many different IP addresses are needed.

Content is usually grabbed and saved in WARC files.

Tracker

The Tracker is server code run by "core" Archive Team volunteers. The Tracker assigns what the Warrior should download and provides a leaderboard.

Staging Server

Staging servers are typically servers running rsync, often operated by "core" volunteers. Warriors upload WARC files to these hosts, which queue the files and package them into large WARC files (Megawarcs). The Megawarcs are then uploaded to the Internet Archive under the Archive Team collection.
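The packaging step works because gzip files can be concatenated: a stream made of several gzip members still decompresses as a single stream. The sketch below shows that idea. It is not the real megawarc tool or file format. `pack_warcs` and its JSON index layout are hypothetical and exist only to show that each original .warc.gz file can still be located by its offset inside the bigger file.

```python
import gzip
import io
import json


def pack_warcs(members):
    """Concatenate already-gzipped WARC files into one large file.

    `members` is a list of (name, gzip_bytes) pairs. Returns the packed
    bytes and a JSON index recording where each original file starts.
    """
    packed = io.BytesIO()
    index = []
    for name, data in members:
        # Record the byte offset before appending this member.
        index.append({"name": name, "offset": packed.tell(), "size": len(data)})
        packed.write(data)
    return packed.getvalue(), json.dumps(index)


if __name__ == "__main__":
    a = gzip.compress(b"WARC/1.0 record A\r\n")
    b = gzip.compress(b"WARC/1.0 record B\r\n")
    blob, index = pack_warcs([("a.warc.gz", a), ("b.warc.gz", b)])
    # Concatenated gzip members still decompress as one stream.
    print(gzip.decompress(blob))
    print(index)
```

Keeping each WARC as its own gzip member is what lets a reader seek to an offset and decompress one original file without touching the rest.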

Internet Archive

The Internet Archive is a digital library and archive. It differs from other hosting services because it is not a distribution platform. If there is a legal issue, items are "darked" (made inaccessible to the general public) instead of deleted.

Items are ingested by the Wayback Machine if it

- has warc.gz files,

- has a "web" media type,

- and is under the Archive Team collection.
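The three ingestion criteria can be written down as a single predicate. This is a rough sketch for checking your own items before upload, not the Internet Archive's actual ingestion logic. The collection identifier "archiveteam" is an assumption made here.

```python
def is_wayback_candidate(filenames, mediatype, collections,
                         archiveteam_collection="archiveteam"):
    """Return True if an item meets all three criteria listed above.

    The collection identifier "archiveteam" is an assumption for this sketch.
    """
    has_warc_gz = any(name.endswith(".warc.gz") for name in filenames)
    return (has_warc_gz
            and mediatype == "web"
            and archiveteam_collection in collections)


print(is_wayback_candidate(["foo-20130430.warc.gz"], "web", ["archiveteam"]))  # True
print(is_wayback_candidate(["foo.zip"], "web", ["archiveteam"]))  # False
```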

Source code repositories

Fork me on GitHub! File and triage issues, fix bugs, refactor code, submit pull requests… all welcome! Discussion in #archiveteam-dev (on hackint).

The warrior uses the following repos:

Client code

Client code includes code that the Warrior executes.

- warrior3 - bootstrap and tools to build the image
  - Bootstrap code that is pulled from GitHub by the appliance and starts a Docker container
- archiveteam/warrior-dockerfile - the container
  - Instructions to bootstrap the Docker container
- warrior2 - warrior runner code
  - Main code that runs inside the Docker container
- seesaw-kit
  - Library that helps build grab scripts and provides the web interface and pipeline engine for the warrior. The name "seesaw" comes from its original behavior: download, upload, and repeat.

Projects

Projects live in separate repositories, typically named with a -grab suffix.

Item lists that are loaded into the tracker are sometimes saved into a repository with an -items suffix. Scripts that build searchable HTML index pages are usually suffixed with -index.

Server code

Server code includes code that the Tracker executes.

universal-tracker - Ruby

- The server that Seesaw clients contact

warrior-hq - Ruby

- The server that warrior appliances contact for project metadata

archiveteam-megawarc-factory - shell

- The scripts that bundle the WARC files.

URLTeam code

URLTeam code is independent of the tracker and warrior.

Old:

- The client code that scrapes the shortlinks. It includes a pipeline shim to run the code.

- The server code for the tracker.

New:

- A pipeline shim to run the code.

- The code for both the client library and tracker.

Misc

- Dockerfile that runs the warrior inside a Docker container.

ArchiveBot - Ruby, Python, Lua

- An IRC bot for archiving websites.

wget-lua - C, Lua

- A patched version of Wget for web crawling.

standalone-readme-template - Markdown

- A template for readme files included in grab repositories.

archiveteam-dev-env - Shell

- Ubuntu preseed for a developer environment for ArchiveTeam projects.

wpull - Python

- A Wget-compatible web downloader/crawler.

Warrior overview

The Warrior is a virtual machine appliance used by volunteers to participate in projects.

Packages

The Warrior image (version 3, version 4) is built on Alpine Linux:

| VM version | Alpine Linux | Kernel |
|---|---|---|
| 3.0 | 3.6.2 | 4.9.32 |
| 3.1 | 3.12.0 | 5.4.43-1virt |
| 3.2 (before automatic update) | 3.13.2 | 5.10.16-0-virt |
| 3.2 (after automatic update) | 3.19.0 | 6.6.7-0-virt or later |
| 4.0 | "latest-stable" | 6.1.39-0-virt |

- The Warrior 3.x virtual machine image is prepared using the stage.sh script and contains a pre-installed /root/boot.sh script that downloads and boots the warrior.
- Warrior 4 is prepared using a different method.

The warrior itself runs in a Docker container running Debian 12 Bookworm that contains Python 3.9, NodeJS, wget-at, and numerous other dependencies.

Warrior 3.2 will automatically update itself from Alpine Linux version 3.13.2 to Alpine Linux version 3.19 upon boot in order to install a more compatible version of Docker that works with Debian Bookworm. The terminal login prompt will still display "Welcome to Alpine Linux 3.13" after this update, but you can check the kernel version or the contents of /etc/apk/repositories to see if this update has been applied.
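If you want to script that check, here is a rough heuristic. It assumes that `uname -r` reports 6.6 or later after the update and that /etc/apk/repositories then points at Alpine's usual ".../alpine/v3.19/..." mirror layout. Both assumptions come from the version table above and from Alpine's standard repository URLs, not from the Warrior's own tooling.

```python
import re


def kernel_version(release):
    """Parse "6.6.7-0-virt" into (6, 6); unparseable strings give (0, 0)."""
    match = re.match(r"(\d+)\.(\d+)", release)
    return (int(match.group(1)), int(match.group(2))) if match else (0, 0)


def alpine_update_applied(kernel_release, repositories_text):
    """Guess whether Warrior 3.2 has moved from Alpine 3.13.2 to 3.19.

    kernel_release: output of `uname -r`
    repositories_text: contents of /etc/apk/repositories
    """
    new_kernel = kernel_version(kernel_release) >= (6, 6)
    # Assumes repository lines use Alpine's usual ".../alpine/v3.19/main" layout.
    new_repos = "/v3.19/" in repositories_text
    return new_kernel or new_repos


if __name__ == "__main__":
    import platform

    try:
        with open("/etc/apk/repositories") as f:
            repos = f.read()
    except OSError:
        repos = ""
    print(alpine_update_applied(platform.release(), repos))
```

The repositories check alone can report the update before the new kernel is running. Rebooting is what actually switches kernels.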

Warrior VM versions 3.0 and 3.1 ship with a Docker container running Ubuntu 16.04 that contains:

- Python 3.5.2, pip 8.1.1
- Perl v5.22.1
- gcc 5.4.0, make 4.1, bash 4.3.48
- curl 7.47.0

VM versions 3.0 and 3.1 will automatically be updated to use the new container configuration the next time they are booted up and connected to the internet.

VM versions 3.0, 3.1, and 3.2-beta will automatically display an EOL message and reboot weekly the next time they are booted up and connected to the internet. Automatically updating these VMs does not appear to be possible, though contributions are welcomed. (Warrior 3.0 segfaults during the upgrade attempt. Warrior 3.1 and Warrior 3.2-beta also segfault during upgrade but that can be fixed by upgrading apk-tools to the latest version for Alpine Linux 3.12 beforehand. However, 3.1 and 3.2-beta still freeze on boot after the upgrade.)

Bootup

The virtual machine is self-updating. It does the following:

- Start the virtual machine

- Linux boots

- boot.sh downloads and launches /root/startup.sh

- startup.sh prepares and runs a Docker container with the warrior runner

- Point your web browser to http://localhost:8001 and go.

On Warrior 3.2, boot.sh also checks repo_prefix.txt and branch.txt to determine if startup.sh should be downloaded from an alternate location. See the boot.sh source code for details including URL format.

Viewing container logs

Starting with VM version 3.2, you can view basic logs from the virtual machine console: press ALT+F2 for Warrior logs, press ALT+F3 for automatic updater logs, and press ALT+F1 to return to the splash screen.

Logging into the Warrior

To log into the warrior,

- Press Alt+F4 (make sure you capture your keyboard in the VM first, or press Alt+Right several times instead).

- The username is root and the password is archiveteam. You are now logged in as root.

- Check the Docker containers with docker ps. This will give you each container's identifier, among other details. If you are using VM version 3.2, there should be containers named warrior (the Warrior itself), watchtower (automatic updater, runs every hour), and instantwtower (automatic updater, runs once on VM startup). VM versions 3.0 and 3.1 will automatically be updated to use these new, named containers the next time they are booted up and connected to the internet.

- Enter the Docker container with:

```bash
docker exec -it identifier /bin/bash
```

Testing Core Warrior Code

This part may be outdated and refer to Warrior version 2

Since the Warrior pulls from GitHub, it is important to commit only stable changes into the master branch. Recommended Git branching practices use a development branch.

To test core Warrior code, you can switch from the master branch to the development branch. The Warrior will fetch the corresponding seesaw-kit repository branch.

To change branches,

- Log in as root

- Execute cd /home/warrior/warrior-code2

- Execute sudo -u warrior git checkout development

- Execute reboot

By the same route you can return your warrior to the master branch.

The code for each project is stored in /home/warrior/projects/<PROJECTNAME>/

Starting a new project

Starting a new project is a giant leap into getting things done.

Remember that usability is very important in archives. Using API endpoints or webpages that are used internally by the website itself will make it easier to browse the archives. Check how playback in the Wayback Machine will look (most notably, support for anything except GET is flaky at best).

Website Structure

Take a good look at how the website is structured:

- Is everything hosted under one domain name?

- Is there a throttling system?

- How can I discover usernames or page IDs?

- Is there an API?

- Is there a sitemap.xml?

- Can I guess URLs by incrementing a value?

- Does disabling cookies or using specific cookies affect anything?

- Does the website break if you make special requests?

- Can you Google site:example.com for some URLs?

  - Hint: site:example.com inurl:show_thread

- Is it a video? Try get-flash-videos
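If the site does have a sitemap.xml, it can be a quick source of item names. Below is a minimal sketch using Python's standard XML parser and the sitemaps.org namespace. The /user/NAME URL layout in the sample is hypothetical, so adapt the last step to the real site.

```python
import xml.etree.ElementTree as ET

# Namespace used by sitemaps.org sitemaps and sitemap indexes.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Return every <loc> URL in a sitemap (or sitemap index) document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.iterfind(".//sm:loc", SITEMAP_NS)]


SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/user/alice</loc></url>
  <url><loc>https://example.com/user/bob</loc></url>
</urlset>"""

# Turn profile URLs into candidate item names (the /user/ layout is hypothetical).
usernames = [url.rsplit("/", 1)[-1] for url in sitemap_locs(SAMPLE)]
print(usernames)  # ['alice', 'bob']
```

A sitemap index lists further sitemaps in its own <loc> elements, so the same function also works for walking the index.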

JavaScript

JavaScript is a pain.

- Check to see if there's a noscript or mobile version.

- Use a web inspector to observe its behavior and simulate POST requests made by the scripts. (This is often much easier than trying to read the JavaScript yourself.)

- Scrape URLs from JavaScript templates with regular expressions.
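As a starting point for the regular-expression approach, here is a minimal sketch that pulls absolute URLs out of quoted JavaScript strings. The pattern is an assumption that only catches complete, quoted http(s) URLs. URLs that the script assembles from pieces at runtime still need a site-specific pattern.

```python
import re

# Absolute http(s) URLs that appear inside single- or double-quoted strings.
QUOTED_URL = re.compile(r"""["'](https?://[^"'\s]+)["']""")


def urls_from_script(script):
    """Return the quoted URLs in a script, de-duplicated, in order of appearance."""
    return list(dict.fromkeys(QUOTED_URL.findall(script)))


script = """
var thumb = "https://img.example.com/a/thumb.png";
loadViewer('http://example.com/viewer.php?id=42');
var again = "https://img.example.com/a/thumb.png";
"""
print(urls_from_script(script))
# ['https://img.example.com/a/thumb.png', 'http://example.com/viewer.php?id=42']
```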

Static Assets

Websites sometimes do not host static media such as images and stylesheets under their primary domain name. Be sure to take those under consideration.

If there are a lot of assets, or these assets need to be deduplicated, you may want to queue them as separate items on the tracker side. To do this, you can use the "backfeed", an API endpoint that lets you queue items, which are then deduplicated with a Bloom filter.
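Bloom filter deduplication is what makes backfeeding cheap. The filter uses a fixed-size bit array, so it can remember millions of items in little memory. The trade-off is that it may occasionally report an unseen item as already seen, but it never forgets one it has stored. The toy version below illustrates the technique only. The tracker's real filter and its parameters may differ, and `backfeed` here is a hypothetical helper, not the tracker's API.

```python
import hashlib


class BloomFilter:
    """Toy Bloom filter: may report false positives, never false negatives."""

    def __init__(self, size_bits=1 << 20, num_hashes=5):
        self.size = size_bits
        self.num_hashes = num_hashes
        self.bits = bytearray(size_bits // 8)

    def _positions(self, item):
        # Derive num_hashes bit positions by salting one hash function.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.size

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, item):
        return all(self.bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(item))


def backfeed(queue, seen, items):
    """Queue only the items the filter has not (probably) seen before."""
    for item in items:
        if item not in seen:
            seen.add(item)
            queue.append(item)
```

For example, feeding ["asset:a.jpg", "asset:b.jpg", "asset:a.jpg"] into an empty queue queues the duplicate only once.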

IP Address Bans & Throttling

Find out if there is IP address banning. Use a sacrificial IP address if you need to. Many providers charge by the hour, allowing you to make such tests for a tiny cost.

Items

- See also: Dev/Seesaw#Quick Definitions

Once you determine the website structure, you need to decide how to split the work efficiently into units, each identified by an item name. An item name is a short string describing the work unit, for example, a username. Some projects have multiple kinds of things that an item could represent; these are most commonly written as type:value, for example, user:foo or asset:bar.jpg.
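On the grab-script side, a type:value item name has to be split back apart. Here is a minimal sketch. Treating bare names as "user" items is an assumption for illustration. Splitting on the first colon only keeps values that contain colons, such as URLs, intact.

```python
def parse_item(item_name, default_type="user"):
    """Split "type:value"; names without a colon get `default_type`."""
    item_type, sep, value = item_name.partition(":")
    if not sep:
        return default_type, item_name
    return item_type, value


print(parse_item("user:foo"))       # ('user', 'foo')
print(parse_item("asset:bar.jpg"))  # ('asset', 'bar.jpg')
# Only the first colon splits, so values may contain colons themselves.
print(parse_item("url:http://example.com/"))  # ('url', 'http://example.com/')
```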

Because the Tracker uses Redis as its database, memory usage is a concern. The maximum number of items supported ranges from 5,000,000 to 10,000,000 depending on the item name length. Keep in mind that the todo and done queues are offloaded to disk, so memory usage for those is not as much of a problem.

- If a user site is USERNAME.example.com, a good candidate is USERNAME.

- Be careful of large subdomain sites.

- If the content is by some numerical ID, consider whether ranges of IDs are appropriate.
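Range items keep the tracker small. At 100 IDs per item, 10,000,000 IDs become only 100,000 items, comfortably within the limits mentioned above. The sketch below generates and expands such items. The "ids:START-END" naming is a hypothetical convention, so pick whatever format your grab script will parse.

```python
def range_items(first_id, last_id, chunk_size):
    """Yield item names such as "ids:1-100" covering first_id..last_id inclusive."""
    start = first_id
    while start <= last_id:
        end = min(start + chunk_size - 1, last_id)
        yield "ids:%d-%d" % (start, end)
        start = end + 1


def expand_range_item(item_name):
    """Turn "ids:201-250" back into the IDs a grab script should fetch."""
    _, _, span = item_name.partition(":")
    start, end = (int(part) for part in span.split("-"))
    return range(start, end + 1)


print(list(range_items(1, 250, 100)))  # ['ids:1-100', 'ids:101-200', 'ids:201-250']
```

Larger chunks mean fewer tracker items, but a failed item then has to redo more work, so choose the chunk size with the site's throttling in mind.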

Call for Action

- ProTip™: Get things done.

Wiki Page

Ensure there is documentation on this wiki about the project.

Include:

- an overview of the website

- the shutdown notice

- "how to help" instructions

- a (future) link to the archives

Writing Grab Scripts

If you do not have permission to create a repository under Archive Team's GitHub organization, please ask on IRC. You can always create one under your own GitHub user and transfer it later (though make sure that the project is okayed before you put in the effort!)

For detailed information about what goes inside grab scripts, take a look at writing Seesaw scripts.

Tracker Access

If you do not have permission to access the Tracker, please see Tracker#People.

IRC Channel

Archive Team uses per-project IRC channels to reduce noise in the main channel. It also serves as a technical support channel.

IRC channel names must be humorous.

- If an employee of the website in danger appears on the channel, please do cooperate.

Project Management

Successful projects are a result of successful management. See Project Management for details.

Getting Attention

Many Twitter followers? Got connections? Become a loudmouth!

Otherwise, take initiative yourself and encourage other team members to take initiative.

Writing Seesaw grab scripts

Writing a Seesaw content grab script is the most challenging and fun aspect of the infrastructure.

Note that much of this page is outdated. The best way to write scripts is to copy another Archive Team project's scripts and change them to your liking.

What an Archive Team Project Contains

Once the Git repository has been created, be sure to include the following files:

pipeline.py

- This file contains the Seesaw client code for the project.

README.{md,rst,txt}

- This file contains:
  - brief information about the project
  - instructions on how to manually run the scripts
- A template is available here: standalone-readme-template

[Project Name Here].lua (optional)

- This is the Lua script used by Wget-Lua.

warrior-install.sh (optional)

- This file is executed by the Warrior to install extra libraries needed by the project. Example: punchfork-grab warrior-install.sh.

wget-lua-warrior (optional)

- This executable is a build of Wget-Lua for the warrior environment.

get-wget-lua.sh (optional)

- Build scripts for Wget-Lua for those running scripts manually.

The repository is pulled in by the Warrior, or manually by those who want to run the scripts themselves.

Writing a pipeline.py (Seesaw Client)

The Seesaw client is the specific set of tasks that must be performed for each item. Think of it as a template of instructions. Typically, the file is called pipeline.py. The pipeline file uses the Seesaw library.

The pipeline file will typically use Wget with Lua scripting. Wget+Lua is a web crawler.

The Lua script is provided as an argument to Wget within the pipeline file. It controls fine-grained operations within Wget, such as rejecting unneeded URLs or adding more URLs as they are discovered.

The goal of the pipeline is to download, make WARC files, and upload them.

Quick Definitions

item

- a work unit

pipeline

- a series of tasks in an item

task

- a step in getting the item done

Installation

You will need:

- Python 2.6/2.7

- Lua

- Wget with Lua hooks

Typically, you can install these on Ubuntu by running:

```bash
sudo apt-get install build-essential lua5.1 liblua5.1-0-dev python python-setuptools python-dev openssl libssl-dev python-pip make libgnutls-dev zlib1g-dev
sudo pip install seesaw
```

You will also need Wget with Lua. There is an Ubuntu PPA or you can build it yourself:

./get-wget-lua.sh

Grab a recent build script from here.

The pipeline file

The pipeline file typically includes:

- A line that checks the minimum seesaw version required
- Copy-and-pasted monkey patches if needed
- A routine to find Wget Lua
- A version number in the form of YYYYMMDD.NN
- Misc constants
- Custom Tasks:
  - PrepareDirectories
  - MoveFiles
- Project information saved into the project variable
- Instructions on how to deal with the item saved into the pipeline variable
- An undeclared downloader variable which will be filled in by the Seesaw library

It is important to remember that each Task is a template on how to deal with each Item. Specific item variables should not be stored on a Task, but rather, it should be saved onto the item: item["my_data"] = "hello".
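Seesaw's real Item and Task classes are richer than this, but the rule can be illustrated with plain Python stand-ins. Item and GreetTask below are hypothetical classes, not Seesaw's API. One task instance processes many items, so configuration lives on the task and anything item-specific is written onto the item.

```python
class Item(dict):
    """Stand-in for a Seesaw item: a dict of per-item values."""


class GreetTask:
    """A task is shared by every item, so it must not keep per-item state."""

    def __init__(self, greeting):
        self.greeting = greeting  # configuration shared by all items: fine

    def process(self, item):
        # Per-item data goes on the item, never on self.
        item["my_data"] = "%s, %s" % (self.greeting, item["item_name"])


task = GreetTask("hello")
alice, bob = Item(item_name="alice"), Item(item_name="bob")
task.process(alice)
task.process(bob)
print(alice["my_data"], "/", bob["my_data"])  # hello, alice / hello, bob
```

If process() had stored the name on self instead, two items running through the pipeline concurrently would overwrite each other's value.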

Minimum Seesaw Version Check

```python
import seesaw
from distutils.version import StrictVersion

if StrictVersion(seesaw.__version__) < StrictVersion("0.0.15"):
    raise Exception("This pipeline needs seesaw version 0.0.15 or higher.")
```

This check is used to prevent manual script users from using an obsolete version of Seesaw. The Warrior will always upgrade to the latest Seesaw if dictated in the Tracker's projects.json file.

Version 0.0.15 is the supported legacy version, but it is suggested to rely on the latest version of Seesaw as specified in the Seesaw Python Package Index.

Monkey Patches

Monkey patches such as AsyncPopenFixed are only provided for legacy versions of Seesaw.

Routine to find Wget-Lua

```python
from seesaw.util import find_executable

WGET_LUA = find_executable(
    "Wget+Lua",
    ["GNU Wget 1.14.lua.20130523-9a5c"],
    [
        "./wget-lua",
        "./wget-lua-warrior",
        "./wget-lua-local",
        "../wget-lua",
        "../../wget-lua",
        "/home/warrior/wget-lua",
        "/usr/bin/wget-lua"
    ]
)

if not WGET_LUA:
    raise Exception("No usable Wget+Lua found.")
```

This routine is a sanity check that aborts the script early if Wget+Lua has not been found. Omit this if needed.

Script Version

```python
VERSION = "20131129.00"
```

This constant, to be used within the pipeline, is sent to the Tracker and should be embedded within the WARC files. It is used for accounting purposes:

- Tracker admins can check the logs for faulty grab scripts and requeue the faulty items.

- Tracker admins can require the user to upgrade the scripts.

Always change the version whenever you make a non-cosmetic change. Note that defining this constant does nothing by itself; be sure that it is used within the pipeline.
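Bumping the version by hand is easy to get wrong, so a tiny helper can compute the next YYYYMMDD.NN value. This sketch assumes one convention: NN counts releases made on the same day and resets to 00 on a new date. `next_version` is a hypothetical helper, not part of Seesaw.

```python
import datetime
import re

VERSION_RE = re.compile(r"^(\d{8})\.(\d{2})$")


def next_version(current, today=None):
    """Return the next YYYYMMDD.NN version string after `current`."""
    today = (today or datetime.date.today()).strftime("%Y%m%d")
    match = VERSION_RE.match(current)
    if not match:
        raise ValueError("not a YYYYMMDD.NN version: %r" % current)
    date_part, counter = match.groups()
    if date_part == today:
        # Another release on the same day: bump the counter.
        return "%s.%02d" % (today, int(counter) + 1)
    # First release on a new day.
    return today + ".00"


print(next_version("20131129.00", datetime.date(2013, 11, 29)))  # 20131129.01
print(next_version("20131129.05", datetime.date(2013, 12, 1)))   # 20131201.00
```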

Misc constants

```python
USER_AGENT = "Mozilla/5.0 (Windows; U; Windows NT 6.1; en-US) AppleWebKit/533.20.25 (KHTML, like Gecko) Version/5.0.4 Safari/533.20.27 ArchiveTeam"
TRACKER_ID = "posterous"
TRACKER_HOST = "tracker.archiveteam.org"
```

Defining constants like USER_AGENT and TRACKER_HOST in one place is good practice for clean code.

Check IP address

This task checks the IP address to ensure the user is not behind a proxy or firewall. Sometimes websites are censored or the user is behind a captive portal (like a coffeeshop wifi) which will ruin results.

```python
import socket

from seesaw.task import SimpleTask


class CheckIP(SimpleTask):
    def __init__(self):
        SimpleTask.__init__(self, "CheckIP")
        self._counter = 0

    def process(self, item):
        # Only run the (slow) DNS check when the counter has run down,
        # i.e. on the first item and then on every 11th item after that.
        if self._counter <= 0:
            ip_str = socket.gethostbyname('example.com')

            if ip_str not in ['1.2.3.4', '1.2.3.6']:
                item.log_output('Got IP address: %s' % ip_str)
                item.log_output(
                    'Are you behind a firewall/proxy? That is a big no-no!')
                raise Exception(
                    'Are you behind a firewall/proxy? That is a big no-no!')

        # Check only occasionally
        if self._counter <= 0:
            self._counter = 10
        else:
            self._counter -= 1
```

PrepareDirectories & MoveFiles

```python
import os
import shutil
import time

from seesaw.task import SimpleTask


class PrepareDirectories(SimpleTask):
    """
    A task that creates temporary directories and initializes filenames.

    It initializes these directories, based on the previously set item_name:
      item["item_dir"] = "%{data_dir}/%{item_name}"
      item["warc_file_base"] = "%{warc_prefix}-%{item_name}-%{timestamp}"

    These attributes are used in the following tasks, e.g., the Wget call.

    * set warc_prefix to the project name.
    * item["data_dir"] is set by the environment: it points to a working
      directory reserved for this item.
    * use item["item_dir"] for temporary files
    """
    def __init__(self, warc_prefix):
        SimpleTask.__init__(self, "PrepareDirectories")
        self.warc_prefix = warc_prefix

    def process(self, item):
        item_name = item["item_name"]
        dirname = "/".join((item["data_dir"], item_name))

        # Start from a clean directory if a previous attempt left files behind
        if os.path.isdir(dirname):
            shutil.rmtree(dirname)

        os.makedirs(dirname)

        item["item_dir"] = dirname
        item["warc_file_base"] = "%s-%s-%s" % (self.warc_prefix, item_name, time.strftime("%Y%m%d-%H%M%S"))

        # Create an empty WARC file, since later tasks expect one to exist
        open("%(item_dir)s/%(warc_file_base)s.warc.gz" % item, "w").close()


class MoveFiles(SimpleTask):
    """
    After downloading, this task moves the warc file from the
    item["item_dir"] directory to the item["data_dir"], and removes
    the files in the item["item_dir"] directory.
    """
    def __init__(self):
        SimpleTask.__init__(self, "MoveFiles")

    def process(self, item):
        os.rename("%(item_dir)s/%(warc_file_base)s.warc.gz" % item,
                  "%(data_dir)s/%(warc_file_base)s.warc.gz" % item)

        shutil.rmtree("%(item_dir)s" % item)
```

These tasks are "traditional" (meaning they are copied, pasted, and modified to fit) for managing temporary files.

Note, PrepareDirectories makes an empty warc.gz file since later tasks expect a warc.gz file.

project variable

```python
import datetime

from seesaw.project import Project

project = Project(
    title = "Posterous",
    project_html = """
        <img class="project-logo"
             alt="Posterous Logo"
             src="http://archiveteam.org/images/6/6c/Posterous_logo.png"
             height="50"/>
        <h2>Posterous.com
            <span class="links">
                <a href="http://www.posterous.com/">Website</a> ·
                <a href="http://tracker.archiveteam.org/posterous/">Leaderboard</a>
            </span>
        </h2>
        <p><i>Posterous</i> is closing April 30th, 2013</p>
    """,
    # month written as 4, not 04: a leading zero is a syntax error in Python 3
    utc_deadline = datetime.datetime(2013, 4, 30, 23, 59, 0)
)
```

This variable is used within the Warrior to show the HTML at the top of the page.

Note that this could potentially be used to show important messages using <p class="projectBroadcastMessage"></p>. However, manual script users will not see anything related to this variable, so you may want to print out any important messages instead.

pipeline variable

Here's a real chunk of code.

```python
pipeline = Pipeline(
    # request an item from the tracker (using the universal-tracker protocol)
    # the downloader variable will be set by the warrior environment
    #
    # this task will wait for an item and sets item["item_name"] to the item name
    # before finishing
    GetItemFromTracker("http://%s/%s" % (TRACKER_HOST, TRACKER_ID), downloader, VERSION),

    # create the directories and initialize the filenames (see above)
    # warc_prefix is the first part of the warc filename
    #
    # this task will set item["item_dir"] and item["warc_file_base"]
    PrepareDirectories(warc_prefix="posterous.com"),

    # execute Wget+Lua
    #
    # the ItemInterpolation() objects are resolved during runtime
    # (when there is an Item with values that can be added to the strings)
    WgetDownload([ WGET_LUA,
            "-U", USER_AGENT,
            "-nv",
            "-o", ItemInterpolation("%(item_dir)s/wget.log"),
            "--no-check-certificate",
            "--output-document", ItemInterpolation("%(item_dir)s/wget.tmp"),
            "--truncate-output",
            "-e", "robots=off",
            "--rotate-dns",
            "--recursive", "--level=inf",
            "--page-requisites",
            "--span-hosts",
            "--domains", ItemInterpolation("%(item_name)s,s3.amazonaws.com,files.posterous.com,"
                "getfile.posterous.com,getfile0.posterous.com,getfile1.posterous.com,"
                "getfile2.posterous.com,getfile3.posterous.com,getfile4.posterous.com,"
                "getfile5.posterous.com,getfile6.posterous.com,getfile7.posterous.com,"
                "getfile8.posterous.com,getfile9.posterous.com,getfile10.posterous.com"),
            "--reject-regex", r"\.com/login",
            "--timeout", "60",
            "--tries", "20",
            "--waitretry", "5",
            "--lua-script", "posterous.lua",
            "--warc-file", ItemInterpolation("%(item_dir)s/%(warc_file_base)s"),
            "--warc-header", "operator: Archive Team",
            "--warc-header", "posterous-dld-script-version: " + VERSION,
            "--warc-header", ItemInterpolation("posterous-user: %(item_name)s"),
            ItemInterpolation("http://%(item_name)s/")
        ],
        max_tries = 2,
        # check this: which Wget exit codes count as a success?
        accept_on_exit_code = [ 0, 8 ],
    ),

    # this will set the item["stats"] string that is sent to the tracker (see below)
    PrepareStatsForTracker(
        # there are a few normal values that need to be sent
        defaults = { "downloader": downloader, "version": VERSION },
        # this is used for the size counter on the tracker:
        # the groups should correspond with the groups set configured on the tracker
        file_groups = {
            # there can be multiple groups with multiple files
            # file sizes are measured per group
            "data": [ ItemInterpolation("%(item_dir)s/%(warc_file_base)s.warc.gz") ]
        },
    ),

    # remove the temporary files, move the warc file from
    # item["item_dir"] to item["data_dir"]
    MoveFiles(),

    # there can be multiple items in the pipeline, but this wrapper ensures
    # that there is only one item uploading at a time
    #
    # the NumberConfigValue can be changed in the configuration panel
    LimitConcurrent(NumberConfigValue(min=1, max=4, default="1",
            name="shared:rsync_threads", title="Rsync threads",
            description="The maximum number of concurrent uploads."),
        # this upload task asks the tracker for an upload target
        # this can be HTTP or rsync and can be changed in the tracker admin panel
        UploadWithTracker(
            "http://%s/%s" % (TRACKER_HOST, TRACKER_ID),
            downloader = downloader,
            version = VERSION,
            # list the files that should be uploaded.
            # this may include directory names.
            # note: HTTP uploads will only upload the first file on this list
            files = [
                ItemInterpolation("%(data_dir)s/%(warc_file_base)s.warc.gz")
            ],
            # the relative path for the rsync command
            # (this defines if the files are uploaded to a subdirectory on the server)
            rsync_target_source_path = ItemInterpolation("%(data_dir)s/"),
            # extra rsync parameters (probably standard)
            rsync_extra_args = [
                "--recursive",
                "--partial",
                "--partial-dir", ".rsync-tmp"
            ]
        ),
    ),

    # if the item passed every task, notify the tracker and report the statistics
    SendDoneToTracker(
        tracker_url = "http://%s/%s" % (TRACKER_HOST, TRACKER_ID),
        stats = ItemValue("stats")
    )
)
```

It's pretty big.

Notice:

- the downloader variable should be left undefined
- ItemInterpolation holds some magic: ItemInterpolation("%(item_dir)s/wget.log").realize(item) evaluates "%(item_dir)s/wget.log" % item, which gives us item["item_dir"] + "/wget.log"
- --output-document concatenates everything into a single temporary file.
- --truncate-output is a Wget+Lua option. It turns --output-document into a temporary-file option: Wget downloads to the file, extracts the URLs, and then truncates the file to 0 bytes.
- the use of -e robots=off, because robots.txt is bad
- --lua-script posterous.lua specifies the Lua script that controls Wget
- NumberConfigValue adds another setting to the Warrior's advanced settings page

Lua Script

The Lua script is like a parasite controlling and modifying Wget's behavior from within.

- Recommended reading: Wget with Lua hooks

- Example listings: Wget with Lua hooks#More_Examples

- Reference documentation: Wget with Lua hooks (GitHub)

Generally, scripts will want to use:

- download_child_p
- httploop_result
- get_urls

download_child_p

This hook is useful for advanced URL accepting and rejecting. Wget supports regular expressions in its command-line options, but they can get messy. Note that Lua itself only supports a small subset of regular expressions, called patterns.

httploop_result

This hook is useful for checking if we have been banned or implementing our own --wait.

Here is a practical example that makes Wget wait a minute on a ban or server overload, waits approximately 1 second between normal requests, and adds no delay for requests to a content delivery network:

```lua
wget.callbacks.httploop_result = function(url, err, http_stat)
  local sleep_time = 60
  local status_code = http_stat["statcode"]

  if status_code == 420 or status_code >= 500 then
    if status_code == 420 then
      io.stdout:write("\nBanned (code "..http_stat.statcode.."). Sleeping for ".. sleep_time .." seconds.\n")
    else
      io.stdout:write("\nServer angered! (code "..http_stat.statcode.."). Sleeping for ".. sleep_time .." seconds.\n")
    end

    io.stdout:flush()

    -- Execute the UNIX sleep command (since Lua does not have its own delay function)
    -- Note that wget has its own linear backoff to this time as well
    os.execute("sleep " .. sleep_time)

    -- Tells wget to try again
    return wget.actions.CONTINUE
  else
    -- We're okay; sleep a bit (if we have to) and continue
    local sleep_time = 1.0 * (math.random(75, 125) / 100.0)

    if string.match(url["url"], "website-cdn%.net") then
      -- We should be able to go fast on images since that's what a web browser does
      sleep_time = 0
    end

    if sleep_time > 0.001 then
      os.execute("sleep " .. sleep_time)
    end

    -- Tells wget to resume normal behavior
    return wget.actions.NOTHING
  end
end
```

- You will likely want to be cautious and use the wget.actions.CONTINUE action to cover a wide range of cases, since Wget may consider a temporary server overload to be a permanent error.
- Yahoo! likes to use status 999 to indicate a temporary ban.
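The same ban-handling policy, extended with Yahoo!'s 999, can be restated as a pure Python function. This is handy for reasoning about or unit-testing a delay policy before porting it back into the Lua hook. It is only a sketch: "retry" and "proceed" are made-up labels for wget.actions.CONTINUE and wget.actions.NOTHING, website-cdn.net is the placeholder CDN host from the example above, and the continuous jitter only approximates Lua's math.random(75, 125) / 100.

```python
import random

# 420 is the ban code from the Lua example; 999 is Yahoo!'s temporary ban.
BAN_CODES = {420, 999}


def delay_for(status_code, url, cdn_marker="website-cdn.net"):
    """Return (action, seconds_to_sleep) for one HTTP response.

    "retry" stands for wget.actions.CONTINUE and "proceed" for
    wget.actions.NOTHING; both labels are made up for this sketch.
    """
    if status_code in BAN_CODES or status_code >= 500:
        return "retry", 60
    if cdn_marker in url:
        # No politeness delay for CDN-hosted assets, as in the Lua version.
        return "proceed", 0.0
    # Jittered delay of roughly one second (0.75 to 1.25 seconds).
    return "proceed", random.uniform(0.75, 1.25)
```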

get_urls

This hook is used to add additional URLs.

This example injects URLs to simulate JavaScript requests:

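```lua
wget.callbacks.get_urls = function(file, url, is_css, iri)
  local urls = {}

  -- Read the downloaded document so it can be searched for image IDs
  local f = io.open(file)
  if not f then
    return urls
  end
  local html = f:read("*all")
  f:close()

  -- Lua pattern: one or more alphanumerics before a literal "/image_thumb.png"
  for image_id in string.gmatch(html, "([a-zA-Z0-9]+)/image_thumb%.png") do
    table.insert(urls, {
      url="http://example.com/photo_viewer.php?imageid="..image_id,
      post_data="crf_token=deadbeef"
    })
  end

  return urls
end
```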

It can also be used to display a progress message:

```lua
url_count = 0

wget.callbacks.get_urls = function(file, url, is_css, iri)
  url_count = url_count + 1

  if url_count % 5 == 0 then
    io.stdout:write("\r - Downloaded "..url_count.." URLs.")
    io.stdout:flush()
  end
end
```

Useful Snippets

Read the first 4 kilobytes (4096 bytes) of a file:

```lua
read_file_short = function(file)
  if file then
    local f = io.open(file)
    if not f then
      return ""
    end
    local data = f:read(4096)
    f:close()
    return data or ""
  else
    return ""
  end
end
```

Run a pipeline.py

To run a pipeline file, run the command:

run-pipeline pipeline.py YOUR_NICKNAME

Please do not run any custom code against the public tracker unless you have explicit permission from an admin. Run a tracker locally instead.

For more options, run:

run-pipeline --help

External Links

- Take a look at the grab scripts in recent Archive Team repositories for examples of clients.

- For more information, consult the seesaw-kit wiki.

Setting up a tracker

This article describes how to set up your own tracker, based on the open-source core of the official Archive Team tracker.

Note: A virtual machine appliance is available at ArchiveTeam/archiveteam-dev-env which contains a ready-to-use tracker. A docker container is also at this fork of the tracker (unofficial).

Installation will cover:

- Environment: Ubuntu/Debian
- Languages:
  - Python
  - Ruby
  - JavaScript
- Web:
  - Nginx
  - Phusion Passenger
  - Redis
  - Node.js
- Tools:
  - Screen
  - Rsync
  - Git
  - Wget
  - regular expressions

The Tracker

The Tracker manages what items are claimed by users that run the Seesaw client. It also shows a pretty leaderboard.

Let's create a dedicated account to run the web server and tracker:

sudo adduser --system --group --shell /bin/bash tracker

Redis

Redis is an in-memory database, so item names should be engineered to be memory-efficient. Redis periodically saves its database to a file located at /var/lib/redis/6379/dump.rdb. It is safe to copy this file, e.g., for backups.

To install Redis, you may follow these quickstart instructions, but we'll show you how.

These steps are from the quickstart guide:

```bash
wget http://download.redis.io/redis-stable.tar.gz
tar xvzf redis-stable.tar.gz
cd redis-stable
make
```

Now install the server:

```bash
sudo make install
cd utils
sudo ./install_server.sh
```

Note, by default, it runs as root. Let's stop it and make it run under www-data:

```bash
sudo invoke-rc.d redis_6379 stop
sudo adduser --system --group www-data
sudo chown -R www-data:www-data /var/lib/redis/6379/
sudo chown -R www-data:www-data /var/log/redis_6379.log
```

Edit the config file /etc/redis/6379.conf to set options like:

```
bind 127.0.0.1
pidfile /var/run/shm/redis_6379.pid
```

Now tell the start up script to run it as www-data:

sudo nano /etc/init.d/redis_6379

Change the EXEC and CLIEXEC variables to use sudo -u www-data -g www-data:

```bash
EXEC="sudo -u www-data -g www-data /usr/local/bin/redis-server"
CLIEXEC="sudo -u www-data -g www-data /usr/local/bin/redis-cli"
PIDFILE=/var/run/shm/redis_6379.pid
```

To avoid catastrophe with background saves failing on fork() (Redis needs lots of memory), run:

sudo sysctl vm.overcommit_memory=1

This setting is lost on reboot. To make it persistent, add this line to /etc/sysctl.conf:

vm.overcommit_memory=1
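To check both the live value and the persisted one, a small Python sketch like the following could be used. It assumes a Linux host, where the setting is exposed under /proc/sys/vm/, and it only understands simple key = value lines in sysctl.conf; the function names are hypothetical.

```python
from pathlib import Path
from typing import Optional

# Runtime value of the sysctl (Linux only).
PROC_PATH = Path("/proc/sys/vm/overcommit_memory")


def runtime_value(path: Path = PROC_PATH) -> Optional[str]:
    """Return the current vm.overcommit_memory value, or None if unreadable."""
    try:
        return path.read_text().strip()
    except OSError:
        return None


def persisted_value(conf_text: str, key: str = "vm.overcommit_memory") -> Optional[str]:
    """Return the value a sysctl.conf-style text assigns to key (last one wins)."""
    value = None
    for raw in conf_text.splitlines():
        line = raw.strip()
        # Skip blank lines, comments (starting with '#' or ';') and non-assignments.
        if not line or line[0] in "#;" or "=" not in line:
            continue
        name, _, setting = line.partition("=")
        if name.strip() == key:
            value = setting.strip()
    return value
```

After the steps above, both runtime_value() and persisted_value(Path("/etc/sysctl.conf").read_text()) should return "1".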

The log file will grow large, so we need a logrotate config. Create one at /etc/logrotate.d/redis with this config:

/var/log/redis_*.log {

daily

rotate 10

copytruncate

delaycompress

compress

notifempty

missingok

size 10M

}
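The copytruncate option lets logrotate rotate a log that a running process keeps open, without restarting that process. The Python sketch below mimics its two steps in simplified form (one rotated copy, no compression); it illustrates the idea and is not how logrotate itself is implemented. As the logrotate documentation warns, anything written between the copy and the truncate is lost.

```python
import shutil
from pathlib import Path


def copytruncate(log_path: Path) -> Path:
    """Simplified copytruncate: copy the log aside, then empty the original in place.

    Unlike logrotate, this keeps only one old copy and does not compress it.
    """
    rotated = log_path.with_name(log_path.name + ".1")
    # Step 1: copy the current contents to the rotated file.
    shutil.copyfile(log_path, rotated)
    # Step 2: truncate the original without replacing it, so a process that
    # keeps the file open carries on writing to the same file.
    with open(log_path, "r+b") as handle:
        handle.truncate(0)
    return rotated
```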

Start up Redis again using:

sudo invoke-rc.d redis_6379 start

Nginx with Passenger

Nginx is a web server. Phusion Passenger is a module within Nginx that runs Ruby web applications such as the tracker.

There is a guide on how to install Nginx with Passenger; the following instructions are similar.

Log in as tracker:

sudo -u tracker -i

We'll use RVM to install Ruby libraries:

curl -L get.rvm.io | bash -s stable

source ~/.rvm/scripts/rvm

rvm requirements

rvm requirements shows a list of packages that need to be installed. Log out of the tracker account, install them, and log back in to the tracker account.

Install Ruby and Bundler:

rvm install 2.2.2

rvm rubygems current

gem install bundler

Install Passenger:

gem install passenger

Install Nginx. This command will download, compile, and install a basic Nginx server:

passenger-install-nginx-module

Use the following prefix for Nginx installation:

/home/tracker/nginx/

Next, set the location of the tracker software (to be installed later). Edit nginx/conf/nginx.conf and add these lines under the "location /" block:

root /home/tracker/universal-tracker/public;

passenger_enabled on;

client_max_body_size 15M;

The logs will grow large, so we'll use logrotate here too. Save this into /home/tracker/logrotate.conf:

/home/tracker/nginx/logs/error.log

/home/tracker/nginx/logs/access.log {

daily

rotate 10

copytruncate

delaycompress

compress

notifempty

missingok

size 10M

}

To run logrotate, we'll add an entry to the tracker's crontab:

crontab -e

Now add the following line:

@daily /usr/sbin/logrotate --state /home/tracker/.logrotate.state /home/tracker/logrotate.conf

Log out of the tracker account at this point.

Let's create an Upstart configuration file to start up Nginx. Save this into /etc/init/nginx-tracker.conf:

description "nginx http daemon"

start on runlevel [2]

stop on runlevel [016]

setuid tracker

setgid tracker

console output

exec /home/tracker/nginx/sbin/nginx -c /home/tracker/nginx/conf/nginx.conf -g "daemon off;"

Or, if you use Systemd, put this into /lib/systemd/system/nginx-tracker.service:

[Unit]

Description="nginx http daemon"

[Service]

Type=simple

ExecStart=/home/tracker/nginx/sbin/nginx -c /home/tracker/nginx/conf/nginx.conf -g "daemon off;"

Tracker

Log in to the tracker account.

Download the Tracker software:

git clone https://github.com/ArchiveTeam/universal-tracker.git

We'll need to configure the location of Redis. Copy the config file:

cp universal-tracker/config/redis.json.example universal-tracker/config/redis.json

Add a "production" object to the JSON file. Here is an example:

{

"development": {

"host": "127.0.0.1",

"port": 6379,

"db": 13

},

"test": {

"host": "127.0.0.1",

"port": 6379,

"db": 14

},

"production": {

"host":"127.0.0.1",

"port":6379,

"db": 1

}

}
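A typo in redis.json only shows up when the tracker starts, so it can help to sanity-check the file first. This Python sketch parses it and validates the "production" object; the 0-15 range assumes Redis's default of 16 databases, and the function name is hypothetical. Whichever db you choose here should also match the redis_db value set in the broadcaster's server.js.

```python
import json

REQUIRED_KEYS = {"host", "port", "db"}
# Redis ships with 16 logical databases (0-15) unless "databases" is raised in its config.
DEFAULT_DB_COUNT = 16


def production_settings(config_text: str) -> dict:
    """Parse redis.json text and return the validated "production" object.

    Invalid JSON raises json.JSONDecodeError, which is a subclass of ValueError.
    """
    config = json.loads(config_text)
    prod = config.get("production")
    if not isinstance(prod, dict):
        raise ValueError('redis.json needs a "production" object')
    missing = REQUIRED_KEYS - set(prod)
    if missing:
        raise ValueError(f"production is missing {sorted(missing)}")
    if not isinstance(prod["port"], int) or not isinstance(prod["db"], int):
        raise ValueError("port and db must be JSON numbers, not strings")
    if not 0 <= prod["db"] < DEFAULT_DB_COUNT:
        raise ValueError(f"db {prod['db']} is outside 0-{DEFAULT_DB_COUNT - 1}")
    return prod
```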

- Now we may need to fix an issue with Passenger forking after the Redis connection has been made. Please see https://github.com/ArchiveTeam/universal-tracker/issues/5 for more information.

- There is also an issue with non-ASCII names. See https://github.com/ArchiveTeam/universal-tracker/issues/7.

Now install the necessary gems:

cd universal-tracker

bundle install

Log out of the tracker account at this point.

Node.js

Node.js is required to run the fancy leaderboard using WebSockets. We'll use NPM to manage the Node.js libraries:

sudo apt-get install npm

Log in to the tracker account.

Next, copy the broadcaster out of the repository and edit it by hand, because the stock version has problems:

cp -R universal-tracker/broadcaster .

nano broadcaster/server.js

Modify the env and trackerConfig variables to something like this:

var env = {

tracker_config: {

redis_pubsub_channel: "tracker-log"

},

redis_db: 1

};

var trackerConfig = env['tracker_config'];

You also need to modify the "transports" configuration by adding websocket. The new line should look like this:

io.set("transports", ["websocket", "xhr-polling"]);

Install the Node.js libraries needed:

npm install

If you get an error while installing hiredis, you may need to make Debian's "nodejs" executable available as "node": symlink "node" to the nodejs executable and try again.

Log out of the tracker account at this point.

Create an Upstart file at /etc/init/nodejs-tracker.conf:

description "tracker nodejs daemon"

start on runlevel [2]

stop on runlevel [016]

setuid tracker

setgid tracker

exec node /home/tracker/broadcaster/server.js

Or, for Systemd, put this into /lib/systemd/system/nodejs-tracker.service:

[Unit]

Description="tracker nodejs daemon"

[Service]

Type=simple

Group=tracker

User=tracker

ExecStart=/usr/bin/js /home/tracker/broadcaster/server.js

Tracker Setup

Start up the Tracker and Broadcaster:

Upstart:

sudo start nginx-tracker

sudo start nodejs-tracker

Systemd:

sudo systemctl start nginx-tracker

sudo systemctl start nodejs-tracker

You now need to configure the tracker. Open up your web browser and visit http://localhost/global-admin/.

- In Global-Admin→Configuration→Live logging host, specify the public location of the Node.js app. By default, it uses port 8080.

You are now free to manage the tracker.

Notes:

- If you followed this guide, the rsync location is defined as rsync://HOSTNAME/PROJECT_NAME/:downloader/

- The trailing slash in the rsync URL is very important. Without it, files will not be uploaded into the directory.
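To make the trailing-slash rule concrete, here is a small Python sketch that fills in the :downloader placeholder and appends a missing slash. It assumes the tracker replaces :downloader with the uploading warrior's nickname; the helper itself is hypothetical.

```python
def upload_url(template: str, downloader: str) -> str:
    """Fill in the :downloader placeholder and make sure the URL ends with '/'."""
    if not downloader or "/" in downloader:
        # An empty name or one containing a slash would not map to a single directory.
        raise ValueError(f"unsuitable downloader name: {downloader!r}")
    url = template.replace(":downloader", downloader)
    if not url.endswith("/"):
        # Without the trailing slash, files would not be uploaded into the directory.
        url += "/"
    return url
```

For example, upload_url("rsync://HOSTNAME/PROJECT_NAME/:downloader/", "alice") gives rsync://HOSTNAME/PROJECT_NAME/alice/.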

Claims

You probably want Cron to clear out old claims. The Tracker includes a Ruby script that does this for you. By default, it removes claims older than 6 hours. For projects with big items, which can take longer than that to download, you may want to change this by creating a copy of the script for each project.
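The idea behind releasing stale claims is a simple age cutoff. The sketch below shows it in Python over a plain dictionary of hypothetical item names and claim times; this data model is for illustration only, since the tracker's actual Redis layout is not described here.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List

# Same default cutoff as the tracker's release script.
DEFAULT_MAX_AGE = timedelta(hours=6)


def stale_claims(claims: Dict[str, datetime], now: datetime,
                 max_age: timedelta = DEFAULT_MAX_AGE) -> List[str]:
    """Return the items whose claim is older than max_age, in sorted order."""
    return sorted(item for item, claimed_at in claims.items()
                  if now - claimed_at > max_age)
```

Raising max_age, e.g. to timedelta(hours=24), is the equivalent of the per-project change suggested above for big items.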

To set up Cron, log in to the tracker account and run:

which ruby

Take note of the Ruby executable path it prints.

Now edit the Cron table:

crontab -e

Add the following line, which runs release-stale.rb every 6 hours (replace WHICH_RUBY with the path noted above and PROJECT_NAME with your project's name):

0 */6 * * * cd /home/tracker/universal-tracker && WHICH_RUBY scripts/release-stale.rb PROJECT_NAME
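In the schedule 0 */6 * * *, the minute field is 0 and the hour field */6 means "every sixth hour, starting from 0". This simplified, hypothetical Python expander handles *, single values, ranges, lists, and steps, but not names such as MON. It shows which hours that field selects:

```python
from typing import List


def expand_field(field: str, low: int, high: int) -> List[int]:
    """Expand a cron field like "*/6", "0", "1-5", "0,30" or "0-30/10"."""
    values = set()
    for part in field.split(","):
        span, _, step_text = part.partition("/")
        step = int(step_text) if step_text else 1
        if span == "*":
            start, end = low, high
        elif "-" in span:
            first, last = span.split("-")
            start, end = int(first), int(last)
        else:
            # A single value; this sketch ignores any step attached to it.
            start = end = int(span)
        values.update(range(start, end + 1, step))
    return sorted(values)
```

expand_field("*/6", 0, 23) returns [0, 6, 12, 18], so the script runs at 00:00, 06:00, 12:00, and 18:00. The @daily shortcut used earlier is equivalent to 0 0 * * *.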

Logs

Since the Tracker stores its logs in Redis, they use up memory quickly. The log-drainer.rb script continuously writes the logs out to text files:

mkdir -p /home/tracker/universal-tracker/logs/

cd /home/tracker/universal-tracker && ruby scripts/log-drainer.rb

Run this within a Screen session so it keeps running after you log out. Pressing CTRL+C stops it.

This crontab entry compresses log files that haven't been modified for at least three days (find's -mtime +2 ignores the fractional part of a file's age in days):

@daily find /home/tracker/universal-tracker/logs/ -iname "*.log" -mtime +2 -exec xz {} \;
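For reference, here is a Python sketch of what that find command selects and what xz then does with each match, assuming GNU find semantics for -mtime and -iname. Unlike find, the sketch only returns regular files. The function names are hypothetical.

```python
import lzma
import shutil
import time
from pathlib import Path
from typing import List, Optional

SECONDS_PER_DAY = 86400


def old_logs(log_dir: Path, days: int = 2, now: Optional[float] = None) -> List[Path]:
    """Regular files matching *.log (any case) that find's -mtime +DAYS would select."""
    now = time.time() if now is None else now
    selected = []
    for path in log_dir.rglob("*"):
        if not path.is_file() or not path.name.lower().endswith(".log"):
            continue
        # find drops the fractional part of the age before comparing with +DAYS.
        age_in_days = int((now - path.stat().st_mtime) // SECONDS_PER_DAY)
        if age_in_days > days:
            selected.append(path)
    return sorted(selected)


def compress_xz(path: Path) -> Path:
    """Write path.xz next to path, then delete path (as the xz command does by default)."""
    target = path.with_name(path.name + ".xz")
    with open(path, "rb") as src, lzma.open(target, "wb") as dst:
        shutil.copyfileobj(src, dst)
    path.unlink()
    return target
```

A file last modified 2.5 days ago is not selected yet; one modified 3.5 days ago is.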

Reducing memory usage

The Passenger Ruby module may use too much memory. To limit it, add the following lines inside the http block of your Nginx config:

passenger_max_pool_size 2;

passenger_max_requests 10000;

The first line limits Passenger to a maximum of 2 application processes. The second line restarts each application process after it has handled 10,000 requests, freeing memory lost to leaks.

Setting up Rsync and Megawarc Factory

The staging servers accept WARC files, package them up, and upload them to the Internet Archive. This guide is useful for those who are setting up Rsync targets.

Note that there is a Dockerized version here. You might find it easier than setting all this up. If so, install it and skip to Testing the target.

Installation will cover:

- Environment: Ubuntu/Debian

- Tools:

  - Screen

  - Rsync

  - Git

Set up the Rsync target

The Rsync target consists of disk space, Rsync, and WARC packing scripts in a dedicated user account.

Create the system user account dedicated for the Rsync target:

sudo adduser --system --group --shell /bin/bash archiveteam

Log in as archiveteam:

sudo -u archiveteam -i

Create a place to store the uploads:

mkdir -p PROJECT_NAME/incoming-uploads/

You may log out of archiveteam at this point.

Rsync

You will need to install Rsync:

sudo apt-get install rsync

Once Rsync is installed, edit its configuration file, /etc/rsyncd.conf. If the file does not exist, copy the example from /usr/share/doc/rsync/examples/rsyncd.conf.

Rsync uses the concept of "modules", which can be thought of as namespaces. If you copied the example file, you can adapt its example ftp module for your new project. It is a good idea to name the module after the project.

You will also need to include:

- path = /home/archiveteam/PROJECT_NAME/incoming-uploads/

- read only = no

- write only = yes

- uid = archiveteam

- gid = archiveteam
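Put together, the module section might look like the output of this Python sketch, which only renders text and does not touch /etc/rsyncd.conf. It assumes the module is named after the project, as suggested above; the function is hypothetical. Note that rsyncd applies uid and gid only when the daemon runs as root.

```python
def rsync_module(name: str, path: str, user: str = "archiveteam") -> str:
    """Render an rsyncd.conf module section with the options listed above."""
    options = {
        "path": path,
        "read only": "no",    # clients may upload...
        "write only": "yes",  # ...but may not download
        "uid": user,          # uploaded files are written as this user
        "gid": user,
    }
    lines = [f"[{name}]"]
    lines += [f"    {key} = {value}" for key, value in options.items()]
    return "\n".join(lines) + "\n"
```

Calling print(rsync_module("PROJECT_NAME", "/home/archiveteam/PROJECT_NAME/incoming-uploads/")) prints a [PROJECT_NAME] header followed by the five settings, one per line.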

Make Rsync start as a daemon at boot by editing /etc/default/rsync. Ensure it reads:

RSYNC_ENABLE=true

Start the Rsync daemon:

sudo invoke-rc.d rsync start

The Megawarc Factory

The Megawarc Factory is a set of scripts that package and bundle up all the uploaded WARC files it receives.

If Git, Curl, or Screen are not yet installed, install them now:

sudo apt-get install git curl screen

Log in as archiveteam and download the scripts needed:

git clone https://github.com/ArchiveTeam/archiveteam-megawarc-factory.git

cd archiveteam-megawarc-factory/

git clone https://github.com/alard/megawarc.git

cd

Let's begin to populate the configuration file:

cp archiveteam-megawarc-factory/config.example.sh PROJECT_NAME/config.sh

nano PROJECT_NAME/config.sh

Going through config.sh:

- MEGABYTES_PER_CHUNK sets how big each megawarc is, in megabytes. Typically it should correspond to 50 GB, but if you really don't have the space, you can use smaller files such as 10 GB.

- IA_AUTH holds your Internet Archive S3-like API authentication keys.

- IA_COLLECTION, IA_ITEM_TITLE, IA_ITEM_PREFIX, and FILE_PREFIX should all have their "todo" placeholders replaced with the project name.

- FS1_BASE_DIR should be set to /home/archiveteam/PROJECT_NAME/

- FS2_BASE_DIR should be set to the same directory as above or to another location.

- COMPLETED_DIR should be left empty (i.e., "") if uploaded files are to be deleted after upload.
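Because MEGABYTES_PER_CHUNK is expressed in megabytes, a size in GB has to be converted. This guide doesn't say whether binary (1024) or decimal (1000) units are meant, so the hypothetical helper below defaults to 1024. Assuming config.sh is read by bash, the same value can also be written with shell arithmetic, which the second function produces.

```python
def megabytes_per_chunk(gigabytes: int, binary: bool = True) -> int:
    """Convert a target megawarc size in GB into a MEGABYTES_PER_CHUNK value."""
    return gigabytes * (1024 if binary else 1000)


def config_line(gigabytes: int) -> str:
    """Render the setting using bash arithmetic (assumes config.sh is read by bash)."""
    return f"MEGABYTES_PER_CHUNK=$((1024*{gigabytes}))"
```

With binary units, 50 GB is 51200 and 10 GB is 10240.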

Politely ask (or bother) someone about getting permission to upload your files to the archiveteam_PROJECT_NAME collection. You can ask in #archiveteam on hackint.

Let's run the Megawarc Factory. First, create a sentinel file:

cd PROJECT_NAME

touch RUN

You can run the Megawarc Factory in Screen. The three scripts will run in separate windows within one Screen session:

screen

../archiveteam-megawarc-factory/chunk-multiple

CTRL+A c

ionice -c 2 -n 6 nice -n 19 ../archiveteam-megawarc-factory/pack-multiple

CTRL+A c

../archiveteam-megawarc-factory/upload-multiple

CTRL+A d

Here are a few Screen pointers:

- screen -r will resume an existing screen session

- CTRL+A c creates a new command window

- CTRL+A SPACE switches to the next window

- CTRL+A " shows you a list of windows

- CTRL+A d leaves, or detaches, the screen session

To stop the Megawarc Factory, remove the sentinel file:

rm RUN
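The RUN file acts as a sentinel: work continues only while it exists, so deleting it asks the scripts to stop. This Python sketch illustrates the general sentinel pattern, checking for the file between rounds of work so the current round can finish cleanly. It uses hypothetical names and is not the factory's actual code.

```python
import time
from pathlib import Path
from typing import Callable, Optional


def run_while_sentinel(sentinel: Path, work: Callable[[], None],
                       poll_seconds: float = 10.0,
                       max_rounds: Optional[int] = None) -> int:
    """Call work() repeatedly for as long as the sentinel file exists.

    Returns the number of rounds completed. The check happens between rounds,
    so removing the sentinel lets the current round finish first.
    """
    rounds = 0
    while sentinel.exists():
        if max_rounds is not None and rounds >= max_rounds:
            break
        work()
        rounds += 1
        time.sleep(poll_seconds)
    return rounds
```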

You can log out of the archiveteam account now.

Testing the target

To make sure the target is working, try a command like this:

rsync -rltvv --progress <file here> rsync://localhost/ateam-airsync/<username>

Explanation of all the options:

- -r: Recurse through directories

- -l: Copy symlinks as symlinks

- -t: Preserve modification times

- -v: Verbose mode; the more v's, the more verbose (hence -vv)

- --progress: Show progress

- <file here>: The filename to send

- rsync://localhost/ateam-airsync/<username>: The destination. Rsync daemons listen on port 873 by default; ateam-airsync is the module name (use the module you configured), and <username> is the directory, named after the uploader, that the file goes into.

Make sure the file(s) w(ere|as) copied successfully.
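If you want to script this check, for example after changing rsyncd.conf, a Python sketch might wrap the same command with subprocess. It assumes rsync is on the PATH and, unlike the command above, appends the trailing slash recommended in the tracker notes so the file lands inside the <username> directory. The helper names are hypothetical.

```python
import shutil
import subprocess
from typing import List


def rsync_test_command(file_path: str, module: str, username: str,
                       host: str = "localhost") -> List[str]:
    """Build the test upload command as an argument list."""
    return ["rsync", "-rltvv", "--progress", file_path,
            f"rsync://{host}/{module}/{username}/"]


def run_rsync_test(file_path: str, module: str, username: str,
                   host: str = "localhost") -> bool:
    """Run the test upload; return True if rsync exits with status 0."""
    if shutil.which("rsync") is None:
        raise RuntimeError("rsync is not installed")
    command = rsync_test_command(file_path, module, username, host)
    # An argument list (not a shell string) avoids quoting problems in file names.
    result = subprocess.run(command, capture_output=True, text=True)
    if result.returncode != 0:
        print(result.stderr)
    return result.returncode == 0
```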

Project management and leadership

Successful projects result from excellent project management.

- ProTip™: Appoint roles, delegate responsibility, manage resources, and listen to fellow volunteers.

The Flight Crew and Customers

Flying an aircraft—even for a single journey—requires collaboration and cooperation. An Archive Team project is very similar:

Pilot

- mediates issues

- adds items to tracker queue

- monitors for buggy results

Co-pilot

- writes content grab scripts

- answers technical questions

- takes the role of pilot if the pilot is missing in action

Engineer

- admins the tracker host machine

- sets up GitHub repository permissions

- sets up Rsync hosts

Attendants

- answers general questions

- troubleshoots installation problems

- triages bugs and issues

Hat-Wearing Loudmouth

- wears hats

- a loudmouth

- over 1 million followers for his cat's Twitter account

- named Jason Scott

Passengers

- runs the Warrior VM appliance

- runs scripts manually

- has many IP addresses

- future potential pilots

- submits GitHub pull requests