Fileplanet

| FilePlanet | |

| Website host of game content, 1996-2012 | |

| URL | http://www.fileplanet.com |

| Status | Closing |

| Archiving status | In progress... |

| Archiving type | Unknown |

| IRC channel | #fireplanet (on hackint) |

FilePlanet is no longer hosting new content, and "is in the process of being archived [by IGN]."

FilePlanet hosts 87,190 download pages of game-related material (demos, patches, mods, promo stuff, etc.), all of which needs to be archived. The files tend to be large, ranging from 10MB patches to 3GB clients. We'll want all the hands we can get for this one, since the job gets harder the further the archiving goes: files are numbered chronologically, and Skyrim mods are bigger than Doom ones.

What We Need

- Files! (approx. 15% done 5/10/12)

- /fileinfo/ pages - get URLs from sitemaps (Schbirid is downloading these)

- http://blog.fileplanet.com

- A list of all "site:www.fileplanet.com inurl:hosteddl" URLs, since these files do not seem to be in the simple ID range

- Where do links like http://dl.fileplanet.com/dl/dl.asp?classicgaming/o2home/rtl.zip come from and can we rescue those too?

How to help

- Have bash, wget, grep, rev, cut

- More than 100 gigabytes of free space, just to be safe

- Put https://github.com/SpiritQuaddicted/fileplanet-file-download/blob/master/download_pages_and_files_from_fileplanet.sh somewhere (I'd suggest ~/somepath/fileplanetdownload/ ) and "chmod +x" it

- Pick a free range (eg 110000-114999) and tell people about it (#fireplanet on EFnet, or post it here). Be careful: in the lower ranges a 5k chunk might work, but chunks get HUGE later. In the 220k range, and probably lower too, we had better use 100 IDs per chunk.

  - Keep the chunk sizes small. Under 30G would be nice; the smaller the better.

- Run the script with your start and end IDs as arguments, eg "./download_pages_and_files_from_fileplanet.sh 110000 114999"

- Take a walk for half a day.

- Once you are done with your chunk, you will have a directory named after your range, eg 110000-114999/ . Inside it are pages_xx000-xx999.log and files_xx000-xx999.log, plus the www.fileplanet.com/ directory.

- Done! GOTO 10
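If you claim a larger range but want to work through it in small pieces, the steps above can be sketched as a dry run that only prints one download command per chunk. This is a minimal sketch, not part of the download script: `print_chunks` and the `SCRIPT` variable are hypothetical names, and the 100-ID chunk size is just the figure suggested above for the 220k range.

```shell
#!/usr/bin/env bash
# Sketch: split an ID range into fixed-size chunks and print one
# download command per chunk. Nothing is downloaded here.
# SCRIPT is the script name used in the steps above; print_chunks is
# a hypothetical helper, not something the script provides.
SCRIPT=./download_pages_and_files_from_fileplanet.sh

print_chunks() {
    local start=$1 end=$2 size=$3
    local lo hi
    for (( lo = start; lo <= end; lo += size )); do
        hi=$(( lo + size - 1 ))
        # Clamp the last chunk to the end of the claimed range.
        if (( hi > end )); then hi=$end; fi
        echo "$SCRIPT $lo $hi"
    done
}

# Example: the range 220000-220499 in chunks of 100 IDs.
print_chunks 220000 220499 100
```

Pass IDs without leading zeros (0 rather than 00000), since bash arithmetic treats numbers with a leading zero as octal. Once the printed commands look right, you can run them one at a time and check disk space between chunks.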

In the end we'll upload all the parts to archive.org. If you have an account, you can use eg s3cmd.

s3cmd --add-header x-archive-auto-make-bucket:1 --add-header "x-archive-meta-description:Files from Fileplanet (www.fileplanet.com), all files from the ID range 110000 to 114999." put 110000-114999.tar s3://FileplanetFiles_110000-114999

s3cmd put 110000-114999/*.log s3://FileplanetFiles_110000-114999/

Mind the trailing slash.
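To avoid retyping the range in several places, the two commands above could be generated from the start and end IDs. The following is a rough sketch that only prints the commands so you can review them before running anything; `print_upload_commands` is a hypothetical helper name, and it assumes the tar file and the range directory follow the naming shown above.

```shell
#!/usr/bin/env bash
# Sketch: print the two s3cmd commands shown above for a given range.
# The commands are only printed, never executed.
# print_upload_commands is a hypothetical helper name.
print_upload_commands() {
    local start=$1 end=$2
    local range="${start}-${end}"
    local bucket="s3://FileplanetFiles_${range}"
    local desc="Files from Fileplanet (www.fileplanet.com), all files from the ID range ${start} to ${end}."

    # Upload of the tar file; the header asks archive.org to create the item.
    echo "s3cmd --add-header x-archive-auto-make-bucket:1 --add-header \"x-archive-meta-description:${desc}\" put ${range}.tar ${bucket}"
    # Upload of the log files; note the trailing slash on the bucket.
    echo "s3cmd put ${range}/*.log ${bucket}/"
}

print_upload_commands 110000 114999
```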

Notes

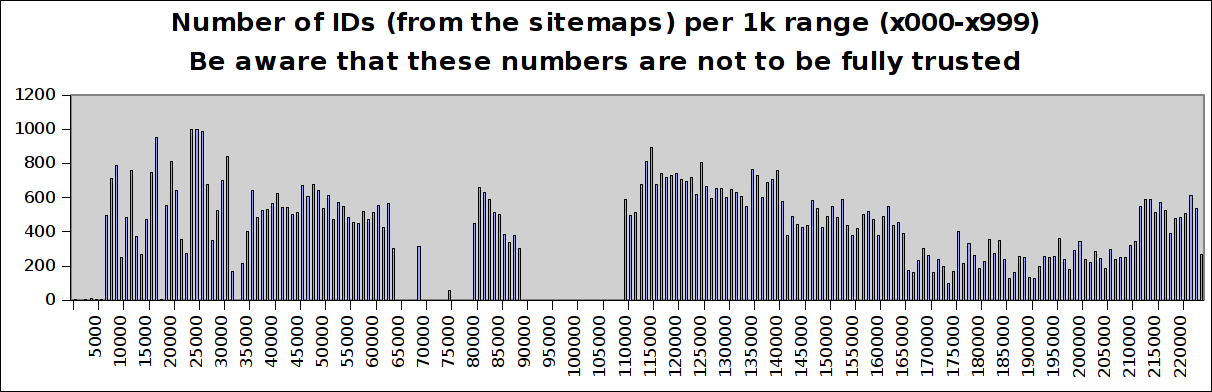

- For planning a good range to download, check http://www.quaddicted.com/stuff/temp/file_IDs_from_sitemaps.txt but be aware that it apparently does not cover all the IDs we can get by simply incrementing by 1. Schbirid downloaded eg file 75059, which is not listed in the sitemaps. So you cannot trust that ID list.

- The range 175000-177761 (weird end number since that's when the server ran out of space...) had ~1100 files and 69G. We will need to use 1k ID increments for those ranges.

- Schbirid mailed FPOps@IGN.com on the 3rd of May; no reply so far.
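The 1k figure for the 175000 range can be checked with a back-of-the-envelope calculation: scale the number of IDs in a finished range by the ratio of the target size to the observed size. This is only a rough sketch, and it assumes data is spread evenly across IDs, which is not really true since later files are bigger. `ids_for_target` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: estimate how many IDs fit into a target chunk size, based on
# the observed size of an already downloaded range.
# Assumes data is spread evenly across IDs (a simplification).
# ids_for_target is a hypothetical helper name.
ids_for_target() {
    local start=$1 end=$2 observed_gb=$3 target_gb=$4
    local ids=$(( end - start + 1 ))
    # Integer arithmetic: the result is rounded down.
    echo $(( target_gb * ids / observed_gb ))
}

# 175000-177761 held 69G; how many IDs stay under 30G?
ids_for_target 175000 177761 69 30
```

For the numbers above this comes out at roughly 1200 IDs per 30G, which is in line with using 1k ID increments for those ranges.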

Status

| Range | Status | Number of files | Size in gigabytes | Downloader |

|---|---|---|---|---|

| 00000-09999 | Done, archived | 1991 | 1G | Schbirid |

| 10000-19999 | Done, archived | 3159 | 9G | Schbirid |

| 20000-29999 | Done, archived | 6453 | 7G | Schbirid |

| 30000-39999 | Done, archived | 4085 | 9G | Schbirid |

| 40000-49999 | Done, archived | 5704 | 18G | Schbirid |

| 50000-54999 | Done, locally | 2706 | 24G | Schbirid |

| 55000-59999 | Done, archived (bad URL) | 2390 | 24G | Schbirid |

| 60000-64999 | Done, archived | 2349 | 24G | Schbirid |

| 65000-69999 | Done, archived | 305 | 4G | Schbirid |

| 70000-79999 | Done, archived | 59 | 0.2G | Schbirid |

| 80000-84999 | Running | | | Debianer |

| 85000-89999 | | | | |

| 90000-109999 | Done, empty | 0 | 0 | Schbirid |

| 110000-114999 | Done, archived | 2139 | 35G | Schbirid |

| 220000-220499 | Done, locally | 250 | 35G | Schbirid |